In this post we explain some extra activities we carried out, and the knowledge we gained, in the Payload, Unmanned Aircraft and System Integration subjects.

Payload

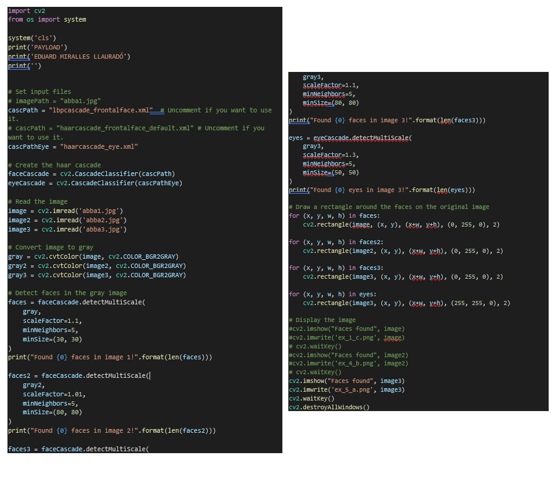

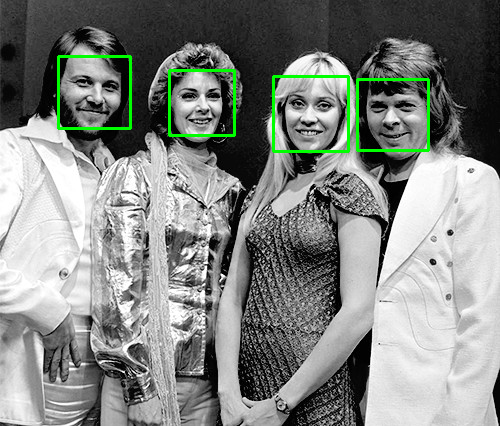

In this subject we have learned about image processing: how to process images to build orthophotos, how to apply masks to detect objects, and how to implement deep learning code that detects objects both in post-processing and live. Of these applications, object detection (in post-processing and live) is the most useful for this project. We implemented a Python script that takes a set of images (which could be captured with the Drone), processes them and detects the faces in them. We also developed a script that detects faces live from a webcam; if it were implemented on the Drone, the webcam would be replaced by the Drone's own camera, giving us live face detection.
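To illustrate the mask-based approach mentioned above, here is a minimal sketch that thresholds a grayscale image into a binary mask and groups the masked pixels into objects with bounding boxes. It assumes the image is a small 2-D list of 0-255 intensities so that it runs without extra libraries; a real pipeline on Drone images would more likely use a library such as OpenCV or NumPy. The function names `threshold_mask` and `find_objects` are our own, not from the course code.

```python
"""Sketch: detect bright objects in a grayscale image with a binary mask.

Assumption: the image is a 2-D list of 0-255 intensities. Function names are
illustrative; a real pipeline would load frames with OpenCV or NumPy.
"""
from collections import deque


def threshold_mask(image, threshold):
    """Return a binary mask: True where the pixel is at or above threshold."""
    return [[pixel >= threshold for pixel in row] for row in image]


def find_objects(mask):
    """Group 4-connected True pixels into objects and return bounding boxes.

    Each box is (min_row, min_col, max_row, max_col), inclusive.
    """
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill of one connected blob.
            queue = deque([(r, c)])
            seen[r][c] = True
            min_r, min_c, max_r, max_c = r, c, r, c
            while queue:
                y, x = queue.popleft()
                min_r, min_c = min(min_r, y), min(min_c, x)
                max_r, max_c = max(max_r, y), max(max_c, x)
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((min_r, min_c, max_r, max_c))
    return boxes


if __name__ == "__main__":
    image = [
        [0, 0, 0, 0, 0, 0],
        [0, 200, 210, 0, 0, 0],
        [0, 220, 230, 0, 0, 0],
        [0, 0, 0, 0, 250, 0],
        [0, 0, 0, 0, 240, 0],
    ]
    print(find_objects(threshold_mask(image, 128)))  # [(1, 1, 2, 2), (3, 4, 4, 4)]
```

The threshold is the main tuning parameter: too low and background merges into objects, too high and objects break apart.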

These applications are very useful in scenarios such as search and rescue: by training the algorithms on banks of labelled images with YOLO (You Only Look Once), we can detect whatever type of object we are interested in, as long as the training images contain it. Here is the code we used and some images of the face detection:
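YOLO-style detectors typically output many overlapping candidate boxes per object, which are cleaned up in post-processing with non-maximum suppression (NMS). The sketch below shows that step under stated assumptions: boxes are `(x1, y1, x2, y2)` pixel tuples with one confidence score each, and the thresholds are illustrative defaults. Detection libraries ship their own NMS, so this only illustrates the idea and is not the code used in the course.

```python
"""Sketch of non-maximum suppression (NMS) for detector output.

Assumptions: boxes are (x1, y1, x2, y2) with one confidence score each;
threshold values are illustrative, not taken from any specific library.
"""


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, score_threshold=0.5, iou_threshold=0.45):
    """Return indices of the boxes kept, highest score first."""
    # Drop low-confidence detections, then visit the rest from best to worst.
    order = sorted(
        (i for i, s in enumerate(scores) if s >= score_threshold),
        key=lambda i: scores[i],
        reverse=True,
    )
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[k]) < iou_threshold for k in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140), (0, 0, 5, 5)]
    scores = [0.9, 0.8, 0.7, 0.3]
    print(non_max_suppression(boxes, scores))  # [0, 2]
```

Box 1 is suppressed because it overlaps box 0 heavily (IoU of about 0.82), and box 3 is discarded for its low score.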

Unmanned Aircraft

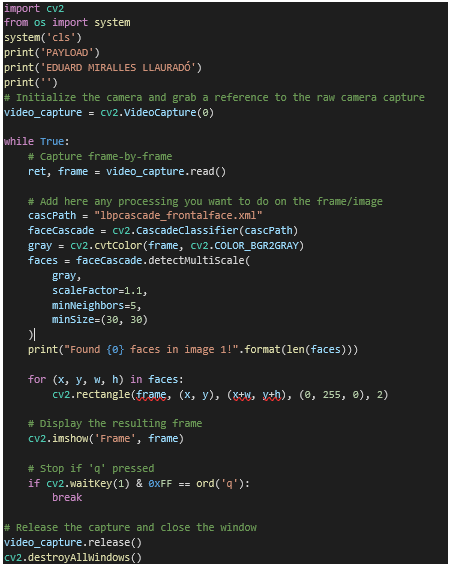

In this subject we learned to use a controller to connect different components and control them with Arduino. We therefore think that one possibility for the Drone is to use an Ultrasonic Sensor to avoid collisions.
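An ultrasonic sensor emits a sound pulse and measures the time t until the echo returns; since the sound travels to the obstacle and back, the distance is d = v·t/2. The sketch below assumes the sensor gives the round-trip time in seconds and approximates the speed of sound in air as v ≈ 331.3 + 0.606·T m/s (T in °C). On the Arduino the timing itself would be done in the sketch running on the board; here only the conversion is shown, and the function names are our own.

```python
"""Convert an ultrasonic round-trip echo time into a distance.

Assumptions: echo time is the round-trip time in seconds; the speed of sound
is approximated as 331.3 + 0.606 * T m/s with T in degrees Celsius.
"""


def speed_of_sound(temp_c=20.0):
    """Approximate speed of sound in air (m/s) at temp_c degrees Celsius."""
    return 331.3 + 0.606 * temp_c


def echo_to_distance(echo_time_s, temp_c=20.0):
    """Distance in metres to the obstacle; the pulse goes there and back."""
    return speed_of_sound(temp_c) * echo_time_s / 2.0


if __name__ == "__main__":
    # A 10 ms echo at 20 degrees C corresponds to roughly 1.72 m.
    print(round(echo_to_distance(0.01), 4))
```

The temperature term matters more than it looks: between 0 °C and 30 °C the speed of sound changes by about 5 %, and so does the measured distance.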

The idea is simple: the goal is to implement a Python script on the Raspberry Pi integrated into the Drone, which runs during the flight. The script connects to the Intel RealSense camera and, using the camera's depth sensing, calculates the distance to an object and shows it on the screen at our ground station.
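A depth camera gives a distance for every pixel, so "the distance to an object" needs a rule for which pixels to look at. The sketch below assumes one simple rule: take the nearest valid reading inside a centred region of the frame. It also assumes the frame is a 2-D list of raw 16-bit depth values, that 0 means "no depth measured", and that the depth scale is 0.001 m per unit. With the `pyrealsense2` package the scale would instead come from the camera's depth sensor (`get_depth_scale()`), and `depth_frame.get_distance(x, y)` returns metres for a single pixel. The function name `nearest_distance` is hypothetical.

```python
"""Sketch: distance to the nearest object in the centre of a depth frame.

Assumptions: the frame is a 2-D list of raw 16-bit depth values, 0 marks
pixels without valid depth, and the depth scale is 0.001 m per unit.
"""


def nearest_distance(depth, depth_scale=0.001, roi_fraction=0.5):
    """Return the smallest valid depth (m) inside a centred region, or None."""
    rows, cols = len(depth), len(depth[0])
    # Centred region of interest covering roi_fraction of each dimension.
    h = max(1, int(rows * roi_fraction))
    w = max(1, int(cols * roi_fraction))
    top, left = (rows - h) // 2, (cols - w) // 2
    valid = [
        raw * depth_scale
        for row in depth[top:top + h]
        for raw in row[left:left + w]
        if raw > 0  # 0 means "no depth measured" for this pixel
    ]
    return min(valid) if valid else None


if __name__ == "__main__":
    frame = [
        [5000, 5000, 5000, 5000],
        [5000, 0, 1200, 5000],
        [5000, 1500, 2000, 5000],
        [300, 5000, 5000, 5000],
    ]
    # The 300 reading lies outside the centred region, so it is ignored.
    print(nearest_distance(frame))
```

Using the minimum over a region rather than a single centre pixel makes the reading more conservative, which suits collision avoidance.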

This application is very useful for indoor flights: since there is no GPS signal indoors, measuring distances lets us order the Drone to HOLD whenever it comes within a minimum distance of an object, thus avoiding a collision. It is one way of navigating indoors.
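The HOLD rule described above can be sketched as a small state machine. This is an illustration under assumptions: the 1.0 m and 1.5 m thresholds are arbitrary examples, the class name `CollisionGuard` is hypothetical, and actually sending the HOLD command to the flight controller is left out. Two thresholds are used (hysteresis) so that a reading hovering around a single limit does not make the Drone flip between HOLD and normal flight.

```python
"""Sketch: decide when to command a HOLD from distance readings.

Assumptions: thresholds are illustrative; sending the real HOLD command to
the flight controller is outside this sketch.
"""


class CollisionGuard:
    """Enter HOLD below min_distance; resume only above resume_distance."""

    def __init__(self, min_distance=1.0, resume_distance=1.5):
        if resume_distance <= min_distance:
            raise ValueError("resume_distance must exceed min_distance")
        self.min_distance = min_distance
        self.resume_distance = resume_distance
        self.holding = False

    def update(self, distance):
        """Feed one reading in metres (None = no valid reading); return mode."""
        if distance is not None:
            if self.holding and distance > self.resume_distance:
                self.holding = False
            elif not self.holding and distance < self.min_distance:
                self.holding = True
        # Without a valid reading the previous mode is kept.
        return "HOLD" if self.holding else "CONTINUE"


if __name__ == "__main__":
    guard = CollisionGuard()
    for d in [2.0, 0.9, 1.2, 1.6, 1.2]:
        print(d, guard.update(d))
```

Note that at 1.2 m the guard stays in HOLD after approaching an obstacle, but stays in CONTINUE once it has moved away, which is exactly the hysteresis at work.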

The code is shown in the next figure:

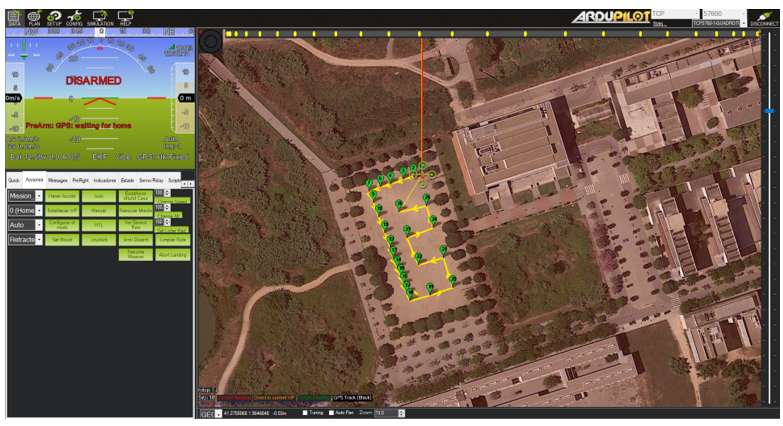

System Integration

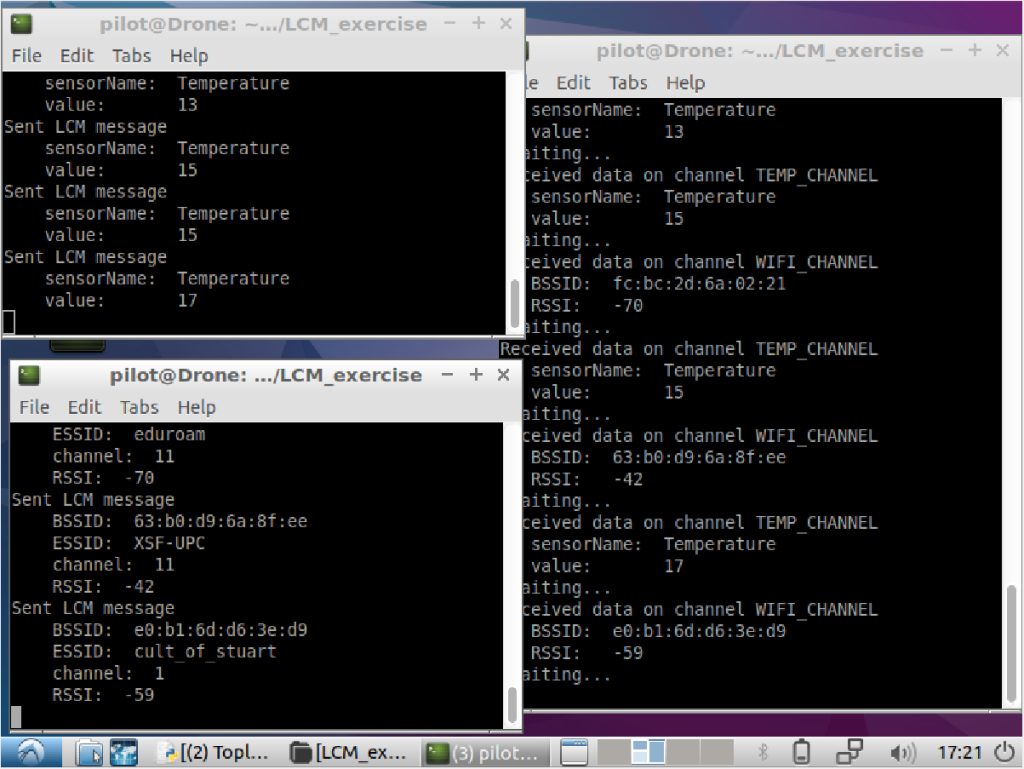

In this course we are learning different applications that can be integrated into the Drone's Raspberry Pi, as well as how to use databases with MongoDB, tables with Pandas, etc. One application related to this project is sending sensor data from the Drone and receiving it on the screen at our ground station. To do this we used LCM (Lightweight Communications and Marshalling), a message-passing system for inter-process communication in real-time systems.
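The "marshalling" in LCM's name means turning a structured message into bytes for transmission and back again. The sketch below shows that idea with Python's `struct` module. It assumes a hand-written fixed layout (a 64-bit timestamp in microseconds plus a 64-bit float value, big-endian); the `SensorReading` class is hypothetical. With LCM itself, the message type would be declared in a `.lcm` file and `lcm-gen` would generate the `encode()`/`decode()` code, which is then sent with `lcm.LCM().publish(channel, msg.encode())`.

```python
"""Sketch of marshalling a sensor reading into bytes and back.

Assumption: a hand-written fixed layout, not LCM's generated encoding.
"""
import struct
from dataclasses import dataclass

# ">qd": big-endian, signed 64-bit int then 64-bit float (16 bytes total).
_LAYOUT = struct.Struct(">qd")


@dataclass
class SensorReading:
    timestamp_us: int  # time of the measurement, in microseconds
    value: float       # e.g. a temperature in degrees Celsius

    def encode(self) -> bytes:
        """Pack the reading into a fixed 16-byte message."""
        return _LAYOUT.pack(self.timestamp_us, self.value)

    @staticmethod
    def decode(data: bytes) -> "SensorReading":
        """Rebuild a reading from bytes produced by encode()."""
        timestamp_us, value = _LAYOUT.unpack(data)
        return SensorReading(timestamp_us, value)


if __name__ == "__main__":
    msg = SensorReading(1_700_000_000_000_000, 23.5)
    data = msg.encode()
    print(len(data), SensorReading.decode(data))
```

A fixed, explicit byte order matters because the Drone's Raspberry Pi and the ground-station PC must agree on exactly how the bytes are laid out.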

With this type of communication, the Drone can publish any data it reads from a sensor, such as temperature, on a channel, and our PC can subscribe to that channel to receive the data in real time while the Drone is carrying out the Mission.
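The channel-based publish/subscribe pattern described above can be illustrated with a toy, in-process message bus. This is explicitly not the LCM API: LCM delivers messages between processes and machines (by default over UDP multicast), while this sketch only calls handlers within one program. The `MessageBus` class and the `TEMPERATURE`/`WIFI` channel names are hypothetical; the handler signature `handler(channel, data)` mirrors the style of LCM's Python subscribe callbacks.

```python
"""Toy in-process publish/subscribe bus illustrating channels.

Not the LCM API: no networking, and the channel names are hypothetical.
"""
import struct
from collections import defaultdict


class MessageBus:
    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, channel, handler):
        """Register handler(channel, data) for every message on channel."""
        self._handlers[channel].append(handler)

    def publish(self, channel, data: bytes):
        """Deliver raw bytes to every subscriber of channel."""
        for handler in self._handlers.get(channel, []):
            handler(channel, data)


if __name__ == "__main__":
    bus = MessageBus()
    received = []

    def on_temperature(channel, data):
        # Ground-station side: unmarshal the 8-byte big-endian float.
        (celsius,) = struct.unpack(">d", data)
        received.append((channel, celsius))

    bus.subscribe("TEMPERATURE", on_temperature)
    # Drone side: publish on two channels; only TEMPERATURE has a subscriber.
    bus.publish("TEMPERATURE", struct.pack(">d", 21.5))
    bus.publish("WIFI", struct.pack(">d", -60.0))
    print(received)  # [('TEMPERATURE', 21.5)]
```

The publisher never needs to know who is listening: the ground station can subscribe to only the channels it wants to display.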

The following figure shows an exercise in which temperature and Wi-Fi data are sent and received in real time (right window):
